Meta has unveiled an AI system that can decode visual information from human brain activity almost in real time. Working from brain recordings that capture thousands of measurements per second, the system reconstructs how the brain perceives and processes images. The accompanying research paper describes these findings as a significant advance toward decoding, in real time, the visual processes unfolding in the human brain.

The technique relies on magnetoencephalography (MEG) to produce a near-real-time visual reconstruction of what a person is seeing.

What Does MEG Stand For and How Does the AI System Operate?

MEG is a non-invasive neuroimaging technique that measures the tiny magnetic fields produced by the electrical activity of neurons in the brain. Because it captures these signals with millisecond-scale temporal resolution, MEG lets researchers track and map brain activity as it unfolds.

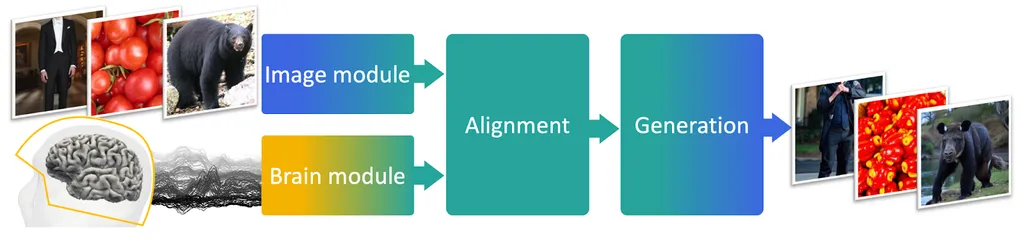

The AI system comprises three key components:

- Image Encoder: This component converts an image into a set of numerical representations (embeddings) that the AI can process, independently of any brain data. In essence, it translates the image into a format suitable for machine analysis.

- Brain Encoder: Acting as an intermediary, this component learns to map MEG signals onto the image embeddings produced by the Image Encoder, linking brain activity to the image's representation.

- Image Decoder: The final component generates a plausible image from the brain-derived representations, producing a picture that reflects what the participant was viewing.
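The article does not say how the Brain Encoder is trained to line up MEG signals with image embeddings. A common way to align two modalities is a CLIP-style contrastive (InfoNCE) objective, and the sketch below assumes one: each brain embedding should be more similar to the embedding of the image the participant actually saw than to any other image in the batch. The function names, the temperature value, and the toy vectors are illustrative assumptions rather than Meta's code, and only the brain-to-image direction of the loss is shown.

```python
import math
from typing import List

Vector = List[float]


def normalize(v: Vector) -> Vector:
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def contrastive_loss(brain: List[Vector], images: List[Vector], temperature: float = 0.1) -> float:
    """CLIP-style InfoNCE loss (brain -> image direction only), an assumed objective.

    brain[i] is the Brain Encoder output for the trial in which the participant
    saw images[i]; the loss is low when each brain embedding is closest to its
    own image embedding among all images in the batch.
    """
    brain = [normalize(v) for v in brain]
    images = [normalize(v) for v in images]
    total = 0.0
    for i, b in enumerate(brain):
        # Temperature-scaled cosine similarity to every image in the batch.
        logits = [dot(b, img) / temperature for img in images]
        # Cross-entropy with the matching image as target, via a stable log-sum-exp.
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]
    return total / len(brain)


# Hypothetical toy embeddings: three images and brain embeddings close to each.
images = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
aligned = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.0], [0.0, 0.1, 0.9]]
shuffled = [aligned[1], aligned[2], aligned[0]]
print(contrastive_loss(aligned, images) < contrastive_loss(shuffled, images))  # True
```

Correctly paired embeddings give a lower loss than shuffled ones, which is the signal a trainer would use to update the Brain Encoder's weights.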

Meta trained this architecture on a public dataset of MEG recordings from healthy volunteers, released by THINGS, an international consortium of academic researchers who share experimental data built on the same image database.

To start, Meta compared the decoding performance obtained with various pre-trained image models and found that brain signals aligned best with modern computer vision systems such as DINOv2, a recent self-supervised architecture that learns rich visual representations without human annotations. According to Meta, this result confirms that self-supervised learning leads AI systems to acquire brain-like representations: the artificial neurons in the algorithm tend to respond similarly to the brain's physical neurons when exposed to the same image.
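One way to score such a comparison, assumed here rather than taken from the article, is retrieval accuracy: for each trial, rank a set of candidate images by how similar their embeddings are to the embedding predicted from MEG, and count how often the image actually shown lands in the top k. Repeating the evaluation with embeddings from each pre-trained model (DINOv2 or others, each paired with its own trained Brain Encoder) would give a side-by-side comparison. The functions and toy vectors below are hypothetical.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two embeddings."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return num / den


def top_k_accuracy(predicted: List[Sequence[float]], candidates: List[Sequence[float]], k: int = 1) -> float:
    """Fraction of trials whose true image ranks in the top k.

    predicted[i] is the embedding decoded from MEG on trial i, and candidates[i]
    is the image-model embedding of the image actually shown on that trial.
    """
    hits = 0
    for i, p in enumerate(predicted):
        scores = [cosine(p, c) for c in candidates]
        # Candidate indices ordered from most to least similar.
        ranking = sorted(range(len(candidates)), key=lambda j: scores[j], reverse=True)
        if i in ranking[:k]:
            hits += 1
    return hits / len(predicted)


# Hypothetical toy data: 2-D embeddings for three images and three MEG trials.
candidates = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
predicted = [[0.9, 0.2], [0.3, 0.8], [-0.2, 0.9]]
print(top_k_accuracy(predicted, candidates, k=1))
print(top_k_accuracy(predicted, candidates, k=2))
```

In the toy data, the third trial's true image ranks second, so top-1 accuracy is 2/3 while top-2 accuracy is 1.0.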

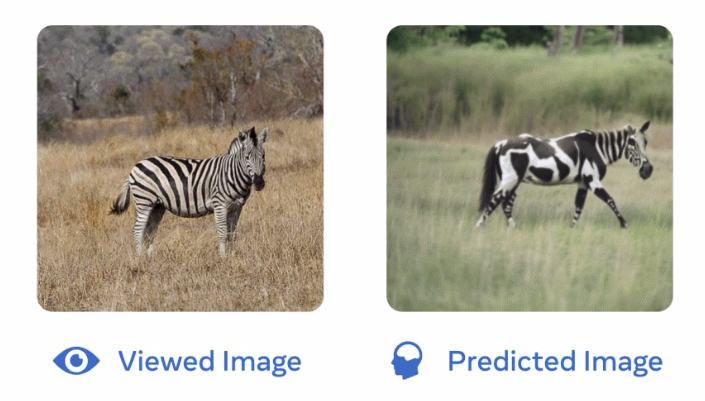

This functional alignment between AI systems and the brain is then used to guide the generation of an image resembling what participants saw during the scan. Although Meta's results indicate that functional magnetic resonance imaging (fMRI) decodes images more accurately, Meta's MEG decoder can be applied at every moment, producing a continuous stream of images decoded from brain activity.
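Continuous decoding can be pictured as sliding a fixed-length window along the incoming MEG stream and decoding each window as soon as it fills. The sketch below assumes that framing; the window length, step, and sampling rate are illustrative values rather than parameters from Meta's paper, and `toy_decoder` is a hypothetical stand-in for the Brain Encoder plus Image Decoder. Each sample could be a single value or a list of per-sensor readings.

```python
from typing import Callable, Iterable, Iterator, List, Tuple, TypeVar

T = TypeVar("T")  # one MEG sample (a single value or a list of sensor readings)
R = TypeVar("R")  # whatever the decoder returns (e.g. an image)


def sliding_windows(samples: Iterable[T], window: int, step: int) -> Iterator[Tuple[int, List[T]]]:
    """Yield (start_index, samples) for each full window, consuming the stream lazily."""
    if not 0 < step <= window:
        raise ValueError("require 0 < step <= window")
    buffer: List[T] = []
    start = 0
    for sample in samples:
        buffer.append(sample)
        if len(buffer) == window:
            yield start, list(buffer)
            # Discard the oldest `step` samples so the next window overlaps this one.
            del buffer[:step]
            start += step


def decode_stream(
    samples: Iterable[T],
    decode: Callable[[List[T]], R],
    sample_rate_hz: float,
    window: int,
    step: int,
) -> Iterator[Tuple[float, R]]:
    """Decode each window as soon as it is full, tagged with its start time in seconds."""
    for start, win in sliding_windows(samples, window, step):
        yield start / sample_rate_hz, decode(win)


def toy_decoder(win: List[float]) -> float:
    """Hypothetical stand-in for Brain Encoder + Image Decoder: averages the window."""
    return sum(win) / len(win)


fake_meg = [float(i) for i in range(10)]  # ten fake samples
for t, out in decode_stream(fake_meg, toy_decoder, sample_rate_hz=100.0, window=4, step=2):
    print(f"t={t:.2f}s -> {out}")
```

Because windows overlap whenever `step` is smaller than `window`, the decoder emits a new output every `step` samples, which is what turns a fast sampling rate into a steady stream of reconstructions.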

The generated images are not perfect, but the findings suggest that they preserve a rich set of high-level features, such as object categories. However, the AI system often gets low-level features wrong, for example by misplacing or misaligning objects in the generated images. In particular, using the Natural Scenes Dataset, Meta shows that MEG-based decoding remains less precise than fMRI, a slower but more spatially precise neuroimaging technique.

Overall, Meta's research shows that MEG can be used to decipher, with millisecond precision, the complex representations that emerge in the brain. The work is part of Meta's ongoing research effort to understand the foundations of human intelligence, identify how it resembles and differs from current machine learning algorithms, and ultimately guide the development of AI systems that learn and reason more like humans.

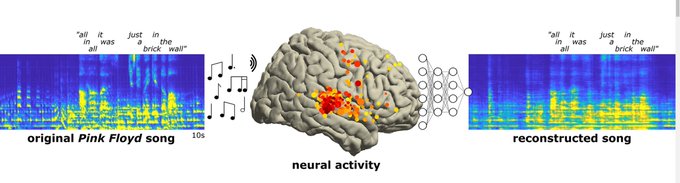

Meta's latest work isn't the only recent advance in mind-reading AI. As reported by Decrypt, a recent study led by the University of California, Berkeley showed that AI could reconstruct music from recorded brain activity.

In that experiment, participants listened to Pink Floyd's “Another Brick in the Wall,” and the AI generated audio resembling the song using only brain data.

Advances in AI and neurotechnology are also producing life-changing applications for people with physical disabilities. A recent report described how a medical team implanted microchips in the brain of a quadriplegic man and, using AI, “relinked” his brain to his body and spinal cord, restoring some sensation and movement. Such breakthroughs hint at the transformative potential of AI in healthcare and rehabilitation.

Potential applications of such technology range widely, from enhancing virtual reality experiences to helping people who have lost the ability to speak because of brain injuries.

AI’s Potential & Pitfalls

However, these advances call for a balanced view. The Meta researchers noted that although the MEG decoder is fast, it does not consistently produce precise images: its reconstructions mainly capture broad aspects of the viewed image, such as object categories, and can miss specific details.

The longer-term implications could be significant. Beyond immediate applications, understanding the foundations of human intelligence and building AI systems that emulate human thinking could redefine how we interact with technology.

The researchers also cautioned about the ethical questions raised by these rapid advances, stressing the need to safeguard mental privacy. Now that AI can turn brain activity into images, it is our responsibility to ensure that the canvas of our minds remains under our control.

Sources:

https://decrypt.co/202258/meta-has-an-ai-that-can-read-your-mind-and-draw-your-thoughts